Anyscale Endpoints, an OpenAI-compatible inference API for open-source LLMs, will soon offer model fine-tuning for Meta’s Llama 2 family of models. Along with opening a waitlist, Anyscale announced that Joe Spisak, who is in charge of open-source generative AI and the Llama models at Meta, will speak about this new collaboration at Anyscale’s upcoming Ray Summit, taking place September 18-20 in San Francisco.

Llama 2 is the most recent iteration of Meta’s family of large language models. The models were trained on 2 trillion tokens and range from 7 to 70 billion parameters. The largest Llama 2 model (Llama-2-70B) currently ranks just behind TII’s Falcon-180B in the pretrained model category of the HuggingFace Open LLM Leaderboard. Developers can already integrate the whole family of Llama 2 models into any application via Anyscale Endpoints. This is an exciting possibility in itself, since Anyscale’s scalable technology makes the size of the application less of a concern. Additionally, Anyscale Endpoints lets developers choose whether to switch from closed models to open ones entirely or to work with open models alongside closed ones.
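To make the "OpenAI-compatible" point concrete, here is a minimal sketch of what a chat request to such an endpoint might look like, using only the Python standard library. The base URL, the model identifier, and the `ANYSCALE_API_KEY` environment variable name are assumptions made for illustration and are not taken from the announcement; check Anyscale’s documentation for the actual values. The request shape follows the OpenAI chat completions format, which is why moving between a closed and an open model can come down to changing the base URL and model name.

```python
import json
import os
import urllib.request

# Assumptions (not from the article): the base URL, the model identifier,
# and the ANYSCALE_API_KEY variable name are illustrative placeholders.
BASE_URL = "https://api.endpoints.anyscale.com/v1"
MODEL = "meta-llama/Llama-2-7b-chat-hf"


def build_chat_request(prompt, model=MODEL, base_url=BASE_URL, api_key=""):
    """Build an OpenAI-style chat completion request without sending it."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.0,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def chat(prompt, **kwargs):
    """Send the request and return the text of the first choice."""
    request = build_chat_request(prompt, **kwargs)
    with urllib.request.urlopen(request, timeout=30) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]


if __name__ == "__main__":
    key = os.environ.get("ANYSCALE_API_KEY", "")
    if key:
        # Only runs when a key is configured; performs a real network call.
        print(chat("Write a SQL query that counts the rows in a table named users.", api_key=key))
    else:
        print("Set ANYSCALE_API_KEY to send a real request.")
```

Keeping the request construction separate from the network call means the payload can be inspected or tested offline, and pointing the same code at a different OpenAI-compatible provider only requires passing another `base_url` and `model`.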

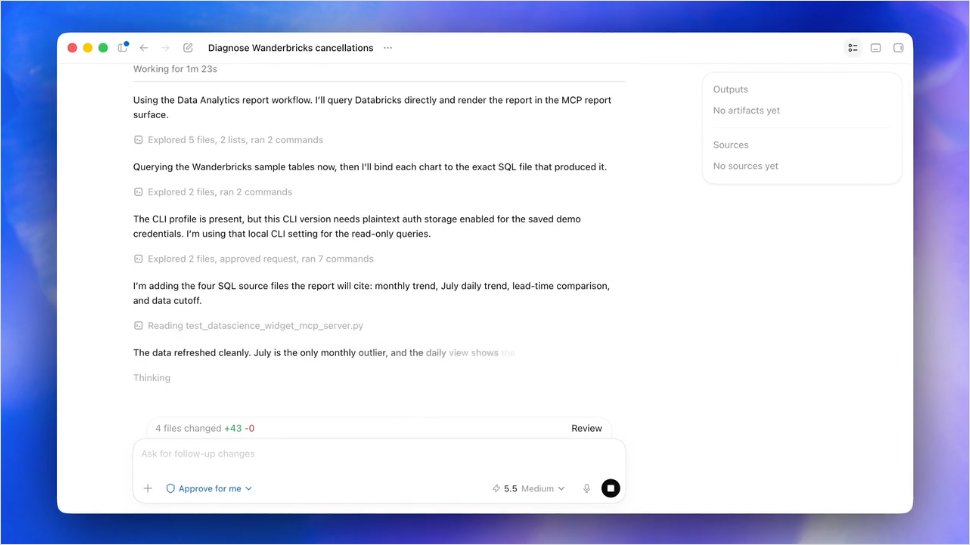

The newest development in Anyscale’s joint research with Meta, about which we’ll presumably learn more in Joe Spisak’s session at Ray Summit, is the finding that Meta’s smallest model, Llama-2-7B, outperformed the closed model GPT-4 on some tasks, such as SQL query generation, once it was fine-tuned. From a cost-effectiveness point of view, this is a very impressive result, since it suggests that costly, large, general-purpose closed models could be traded for smaller, fine-tuned open-source LLMs.

You can read Anyscale’s official announcement here.
