Data Phoenix Digest - ISSUE 7.2024

Explore recordings of all past Data Phoenix webinars and AI highlights from the past week.

AI Index Report, Llama 3, Idefics 2, Qdrant Hybrid Cloud, Deploying LLMs Into Production Using TensorRT LLM, Neural Network Diffusion, Intro to DSPy, LLM Evaluation at Scale with the NeurIPS Large Language Model Efficiency Challenge, T-RAG, Direct-a-Video, EMO, DistriFusion and more.

Dive down the AI Rabbit Hole and journey through the wonderland of artificial intelligence at the upcoming conference on April 19th. Inspired by the timeless tale of Alice in Wonderland, this event promises to be a whimsical exploration of the latest advancements in AI technology.

Join the Data Phoenix webinar, where Dmytro Spodarets and guests Greg Loughnane (Co-Founder & CEO of AI Makerspace) and Chris Alexiuk (Co-Founder & CTO of AI Makerspace) will discuss how to build a RAG application using fine-tuned domain-adapted embeddings.

We are a fast-growing startup, and we are looking for a Middle+/Senior Machine Learning engineer to join our team.

We are seeking enthusiastic ML Engineers to join our team. This is a great opportunity for those looking to deepen their expertise in machine learning and contribute to various innovative AI projects 🤖

This role involves designing, implementing, and improving retrieval-augmented generation (RAG) and LLM-based agent systems built on proprietary (OpenAI) and open-access (Llama-2, Gemma) models.

NVIDIA's Omniverse Cloud APIs enable developers to integrate RTX rendering and OpenUSD into their software tools and simulation workflows. Industry leaders worldwide leverage the Omniverse Cloud APIs to enhance their workflows and deliver solutions for the development of digital twin simulations.

The newly launched NVIDIA microservices enable organizations to build and deploy AI applications locally while preserving control of their data. NIM supplies high-performing containers for proprietary and open model deployment while CUDA-X accelerates the production process.

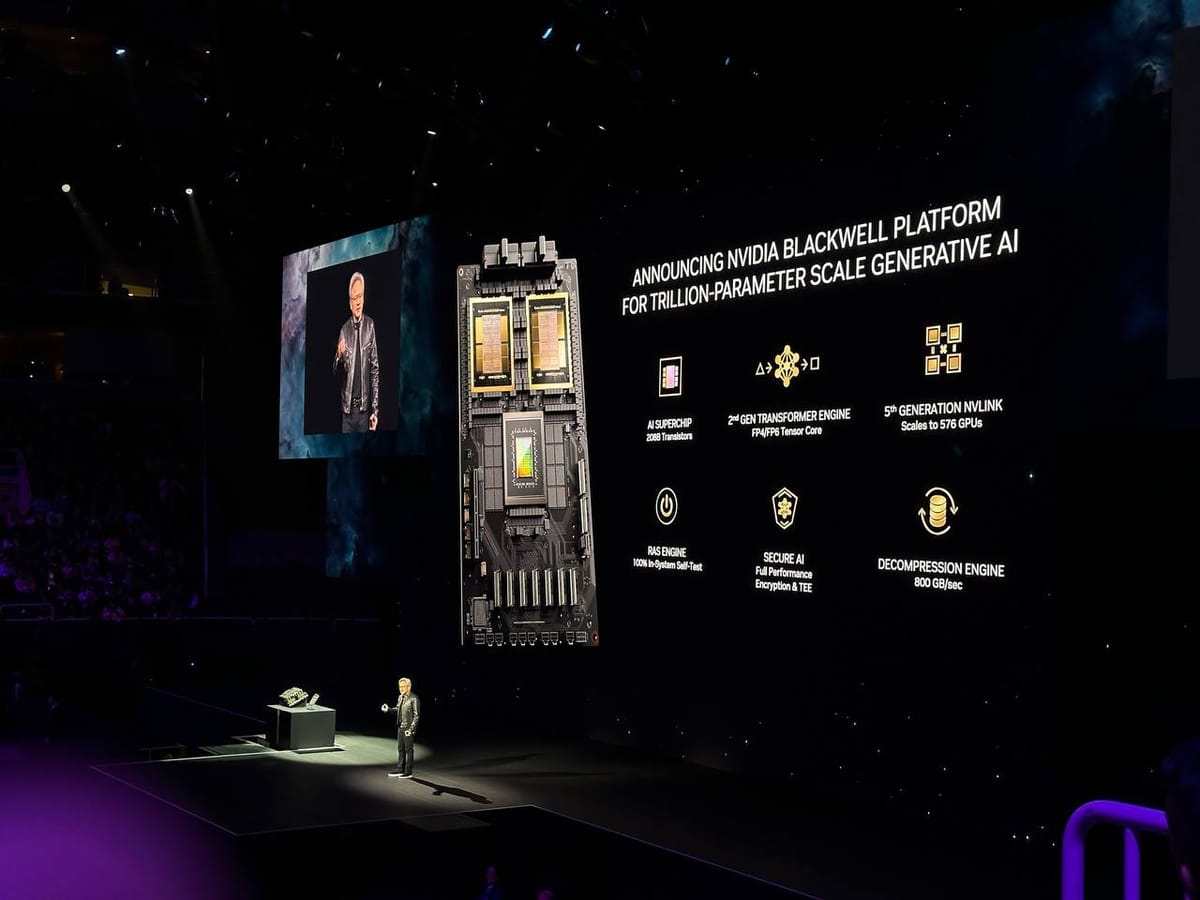

NVIDIA announced its new Blackwell architecture at the GTC keynote. Blackwell succeeds the Hopper architecture, promising up to a 30x performance increase and up to 25x lower cost and energy consumption when training or performing real-time inference for LLMs of up to 10 trillion parameters.