VIDEO

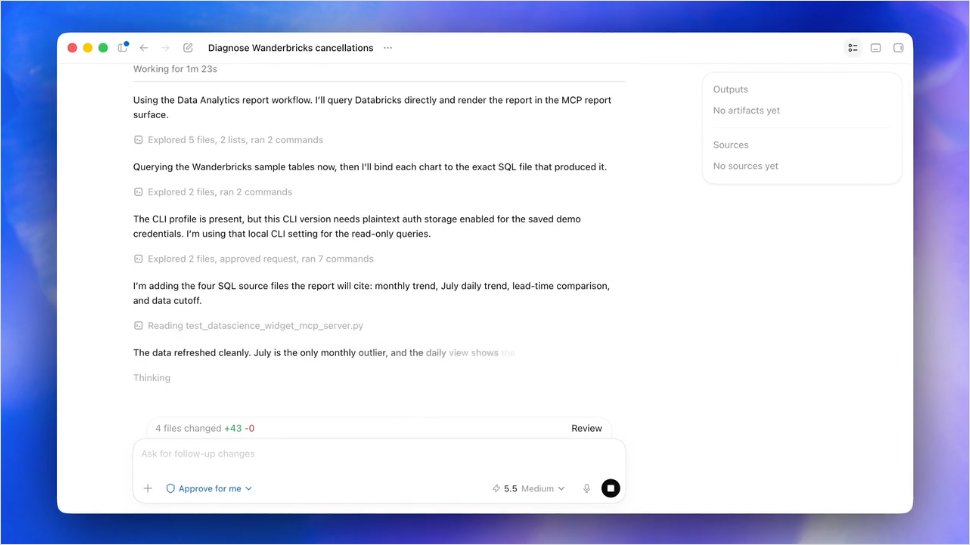

dstack – a command-line utility to provision infrastructure for ML workflows

Video recording of our webinar about dstack and reproducible ML workflows by Andrey Cheptsov.

If you have interesting topics or projects that you would like to share with the world in our webinars, you can submit them here.

ARTICLES

In this article, the author describes AVL trees and the operations you can perform on them, such as inserting a node in the different rebalancing cases (for example, left-right or right-right).
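
To give a flavor of those insertion cases, here is a minimal sketch of AVL insertion in Python. It is not taken from the article: the names `Node`, `insert`, `rotate_left`, and `rotate_right` are our own illustrative choices, and duplicate keys are simply ignored.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    key: int
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    height: int = 1  # height of the subtree rooted at this node


def height(node: Optional[Node]) -> int:
    return node.height if node else 0


def update(node: Node) -> None:
    node.height = 1 + max(height(node.left), height(node.right))


def balance(node: Node) -> int:
    # Positive means left-heavy, negative means right-heavy.
    return height(node.left) - height(node.right)


def rotate_right(y: Node) -> Node:
    x = y.left
    y.left = x.right
    x.right = y
    update(y)
    update(x)
    return x


def rotate_left(x: Node) -> Node:
    y = x.right
    x.right = y.left
    y.left = x
    update(x)
    update(y)
    return y


def insert(node: Optional[Node], key: int) -> Node:
    # Ordinary binary search tree insertion first.
    if node is None:
        return Node(key)
    if key < node.key:
        node.left = insert(node.left, key)
    elif key > node.key:
        node.right = insert(node.right, key)
    else:
        return node  # assumption: duplicates are ignored

    update(node)
    b = balance(node)

    if b > 1:  # left-heavy
        if key > node.left.key:  # left-right case: rotate the child first
            node.left = rotate_left(node.left)
        return rotate_right(node)  # left-left case (or second half of left-right)
    if b < -1:  # right-heavy
        if key < node.right.key:  # right-left case: rotate the child first
            node.right = rotate_right(node.right)
        return rotate_left(node)  # right-right case (or second half of right-left)
    return node
```

Inserting 1, 2, 3 in that order triggers the right-right case, while 3, 1, 2 triggers the left-right case; in both runs the tree ends up with 2 at the root.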

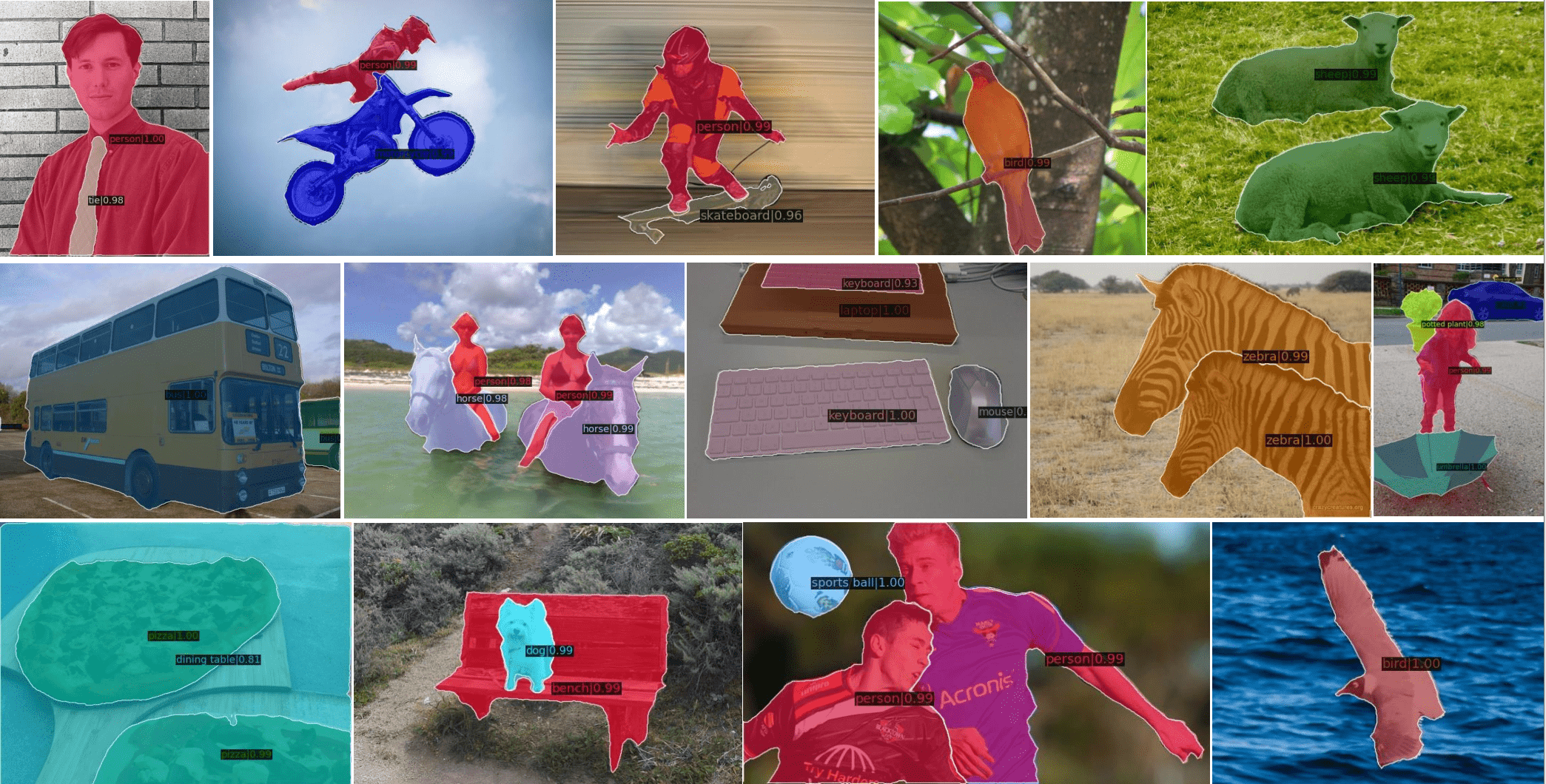

Ultralytics YOLOv8: State-of-the-Art YOLO Models

YOLOv8 is the latest family of YOLO-based object detection models from Ultralytics, providing state-of-the-art performance. This article looks into the latest improvements and features added to YOLOv8 and provides a guide on using it in practice.

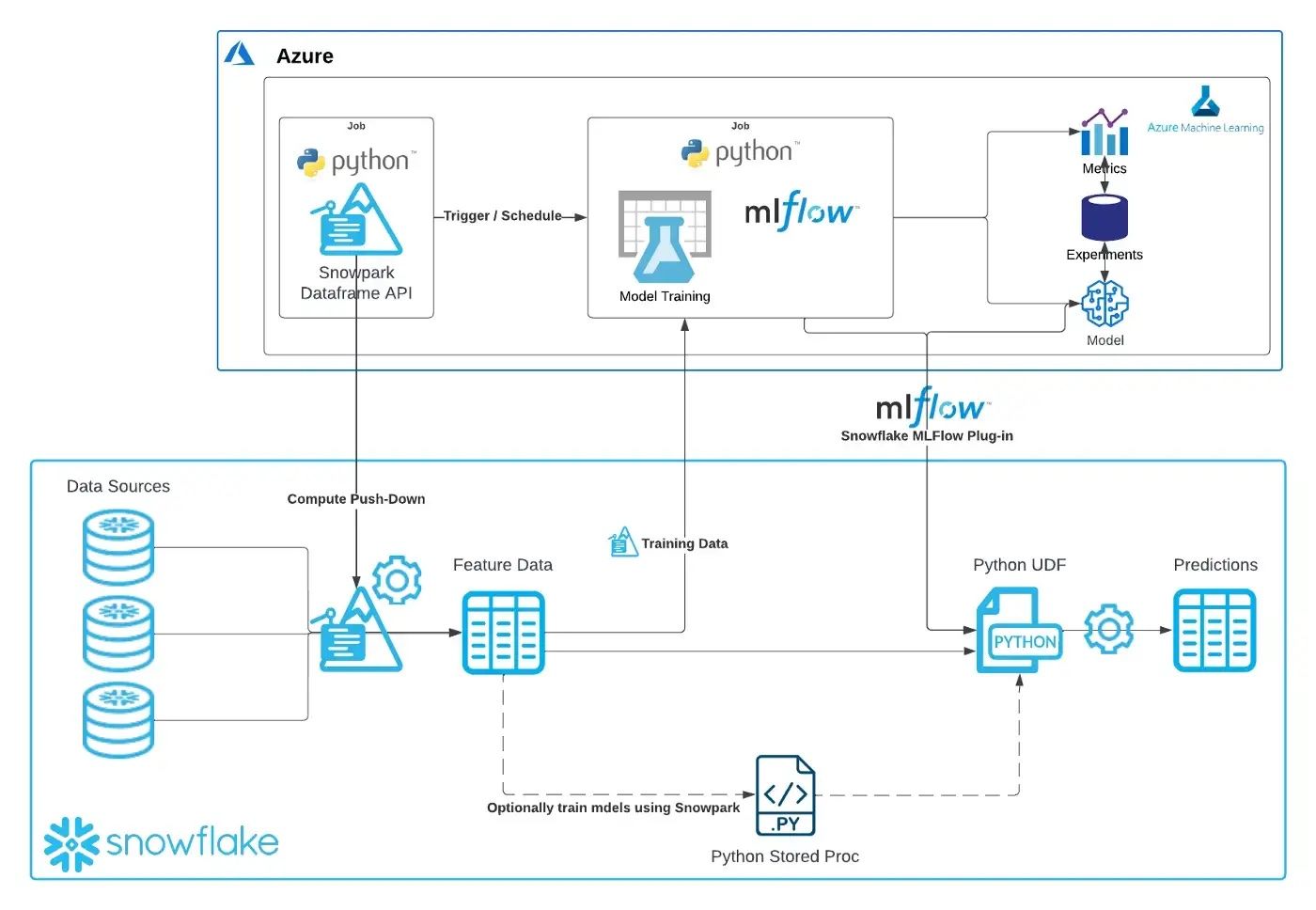

End-to-End MLOps with Snowpark Python and MLFlow

How would you leverage Snowpark Python for operationalizing your machine learning models within the flow of your existing MLOps processes? The article provides detailed answers and looks into end-to-end MLOps, from A to Z.

Best practices for load testing Amazon SageMaker real-time inference endpoints

In this post, the authors describe how you can load test your SageMaker real-time endpoint. They also discuss which metrics you should evaluate when load testing your endpoint to understand your performance breakdown.

Training XGBoost with MLflow Experiments and HyperOpt Tuning

The author claims that it is easiest to start with MLOps during the experimentation phase (model tracking, versioning, registry). It’s lightweight and highly configurable, which makes it easy to scale up and down. Check out the article to learn more about the author's perspective!

Productionize Machine Learning Models with Serverless Container Services

This article goes deep into the specifics of using serverless container services for machine learning. Specifically, it explains how to create a serverless containerized inference endpoint for your ML models with Azure Container Apps. Learn more!

Introduction to Forecasting Ensembles

Ensemble forecasting is a method used in numerical weather prediction. It gives the forecaster a much better idea of what weather events may occur at a particular time. Assuring its performance may be problematic, though. Here’s a cheap trick to boost it.

Building a Predictive Maintenance Solution Using AWS AutoML and No-Code Tools

Industrial machine, equipment, and vehicle operators need to reduce maintenance costs while operating under strict constraints. This article presents a predictive maintenance solution built using AutoML and no-code tools powered by AWS. Check it out!

PAPERS & PROJECTS

Zero-Shot Text-Guided Object Generation with Dream Fields

Dream Fields can generate the geometry and color of a wide range of objects without 3D supervision. It combines neural rendering with multi-modal image and text representations to synthesize diverse 3D objects solely from natural language descriptions. Take a look!

AgileAvatar: Stylized 3D Avatar Creation via Cascaded Domain Bridging

AgileAvatar is a novel self-supervised learning framework to create high-quality stylized 3D avatars with a mix of continuous and discrete parameters. To ensure the discrete parameters are optimized, a cascaded relaxation-and-search pipeline is implemented.

RoDynRF: Robust Dynamic Radiance Fields

In this work, the authors address the robustness issue of dynamic radiance field reconstruction methods by jointly estimating the static and dynamic radiance fields along with the camera parameters (poses and focal length). Learn how they do it!

Box2Mask: Box-supervised Instance Segmentation via Level-set Evolution

Box2Mask is a novel single-shot instance segmentation approach, which integrates the classical level-set evolution model into deep neural network learning to achieve accurate mask prediction with only bounding box supervision. Check the paper out!

X-Decoder: Generalized Decoding for Pixel, Image and Language

X-Decoder is a generalized decoding pipeline that can predict pixel-level segmentation and language tokens seamlessly. X-Decoder is the first work that provides a unified way to support all types of image segmentation and a variety of vision-language (VL) tasks. Learn more!

Domain Expansion of Image Generators

The authors propose a new task, domain expansion, for injecting new concepts into an already trained generative model while respecting its existing structure and knowledge. Check out how it works and learn why it may be a breakthrough!

Muse: Text-To-Image Generation via Masked Generative Transformers

Muse is a text-to-image Transformer model that achieves state-of-the-art image generation performance while being significantly more efficient than diffusion or autoregressive models. Muse is efficiently trained to predict randomly masked image tokens.

If you enjoyed this content, make sure to subscribe to our newsletter and share it with others who may be interested. Follow us on social networks (Telegram, Facebook, Twitter, LinkedIn, YouTube) to stay updated about upcoming webinars and get more interesting content.

For collaboration inquiries, including sponsoring one of the future newsletter issues, please contact us here.
