Chinese AI startup DeepSeek recently released DeepSeek-V4, an open-source model with 1.6 trillion parameters. Reportedly, the model approaches, though does not quite match, the performance of leading closed-source offerings such as GPT-5.5 and Claude Opus 4.7.

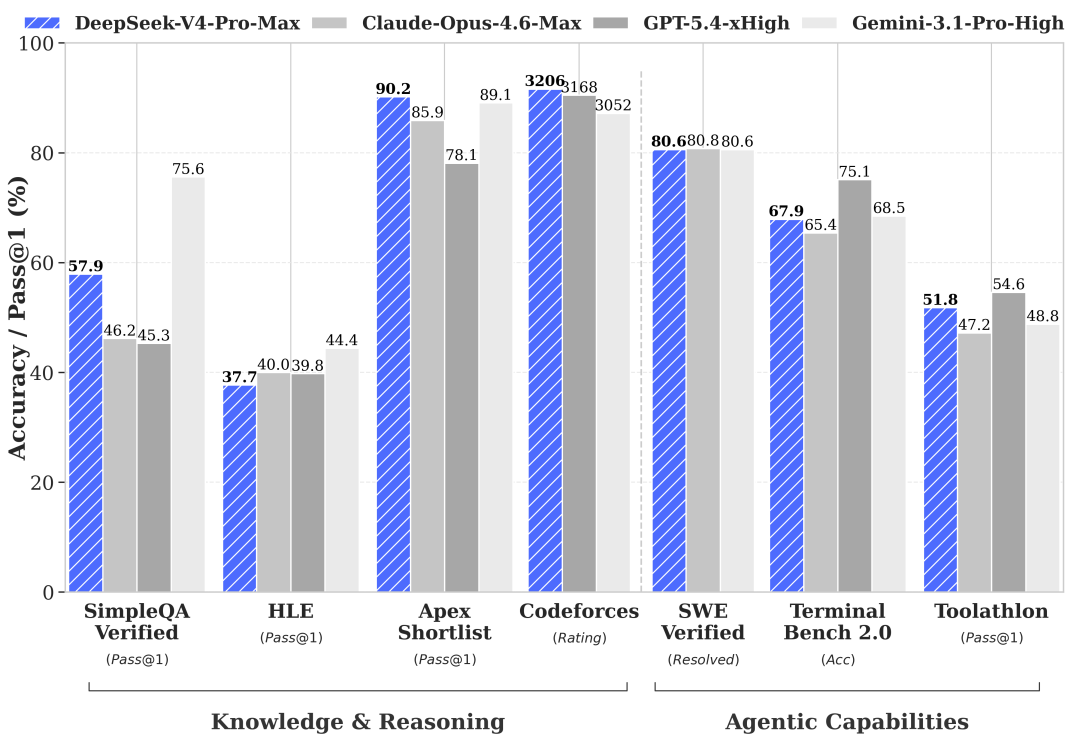

In most of the reported benchmarks, DeepSeek-V4 at its maximum effort setting (DeepSeek-V4-Pro-Max) trails one or both of these models by less than 5%, an impressive feat for an open-source model offered via API at roughly one-sixth to one-seventh of the cost of either. On BrowseComp, it scored 83.4%, nearly matching GPT-5.5's 84.4% and beating Claude Opus 4.7's 79.3%. On Terminal-Bench 2.0, it reached 67.9%, competitive with Claude Opus 4.7's 69.4% but behind GPT-5.5's 82.7%.

DeepSeek-V4-Pro is released under the permissive MIT License. Via API, it is priced at $1.74 per million input tokens and $3.48 per million output tokens, a combined $5.22 for one million tokens of each, compared to $35 for OpenAI's GPT-5.5 and $30 for Anthropic's Claude Opus 4.7 on the same basis. With cached input, the combined cost for DeepSeek-V4-Pro drops to $3.625. Alternatively, the smaller and faster DeepSeek-V4-Flash is offered at a combined $0.42, which drops to around $0.30 with cached input.
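To make the price comparison concrete, here is a minimal Python sketch that reproduces the arithmetic from the figures quoted above. The function names are hypothetical. The implied cached-input price is an inference that assumes the output price stays the same when input is cached, which the article does not state.

```python
# Prices in USD per million tokens, as quoted in the article.
V4_PRO_INPUT = 1.74
V4_PRO_OUTPUT = 3.48

# Combined prices (1M input tokens + 1M output tokens), as quoted in the article.
V4_PRO_COMBINED = V4_PRO_INPUT + V4_PRO_OUTPUT
V4_PRO_COMBINED_CACHED = 3.625
GPT_5_5_COMBINED = 35.00
CLAUDE_OPUS_4_7_COMBINED = 30.00


def cost_ratio(other_combined: float, base_combined: float) -> float:
    """How many times more expensive `other` is than `base`."""
    return other_combined / base_combined


def implied_cached_input_price(combined_cached: float, output_price: float) -> float:
    """Cached-input price per million tokens.

    Assumption (not stated in the article): the output price is
    unchanged when the input is served from cache.
    """
    return combined_cached - output_price


def job_cost(input_tokens: int, output_tokens: int,
             input_price: float, output_price: float) -> float:
    """Cost in USD of one job, given per-million-token prices."""
    return (input_tokens / 1e6) * input_price + (output_tokens / 1e6) * output_price


if __name__ == "__main__":
    print(f"V4-Pro combined: ${V4_PRO_COMBINED:.2f}")
    print(f"GPT-5.5 vs V4-Pro: {cost_ratio(GPT_5_5_COMBINED, V4_PRO_COMBINED):.1f}x")
    print(f"Claude Opus 4.7 vs V4-Pro: "
          f"{cost_ratio(CLAUDE_OPUS_4_7_COMBINED, V4_PRO_COMBINED):.1f}x")
    cached = implied_cached_input_price(V4_PRO_COMBINED_CACHED, V4_PRO_OUTPUT)
    print(f"Implied cached input price: ${cached:.3f} per million tokens")
```

The two ratios work out to roughly 6.7 and 5.7, which is where the "one-sixth to one-seventh of the cost" comparison comes from.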

DeepSeek-V4 is the result of some outstanding technical achievements. The model features a native one-million-token context window while requiring only 10% of the key-value cache and 27% of the inference compute of its predecessor. This was enabled by innovations including Compressed Sparse Attention, Heavily Compressed Attention, and Manifold-Constrained Hyper-Connections for signal stabilization.

The development of DeepSeek-V4 also wasn't entirely reliant on Nvidia hardware. DeepSeek validated its architecture on Huawei Ascend NPUs, although it reports that it still used fully licensed Nvidia GPUs for model training. It remains to be seen whether these claims hold up in real-world use. Even so, validating the model architecture on non-Nvidia hardware is significant, and has important implications for the development of sovereign AI that is also cost-effective to run.
