Last month, Google DeepMind released Gemma 4, its most capable open-source model family to date, under an Apache 2.0 license. The announcement suggests that the choice of such a permissive license follows feedback from the open AI community, which has downloaded Gemma models more than 400 million times since the first generation launched and has built more than 100,000 variants, collected in the cleverly named Gemmaverse.

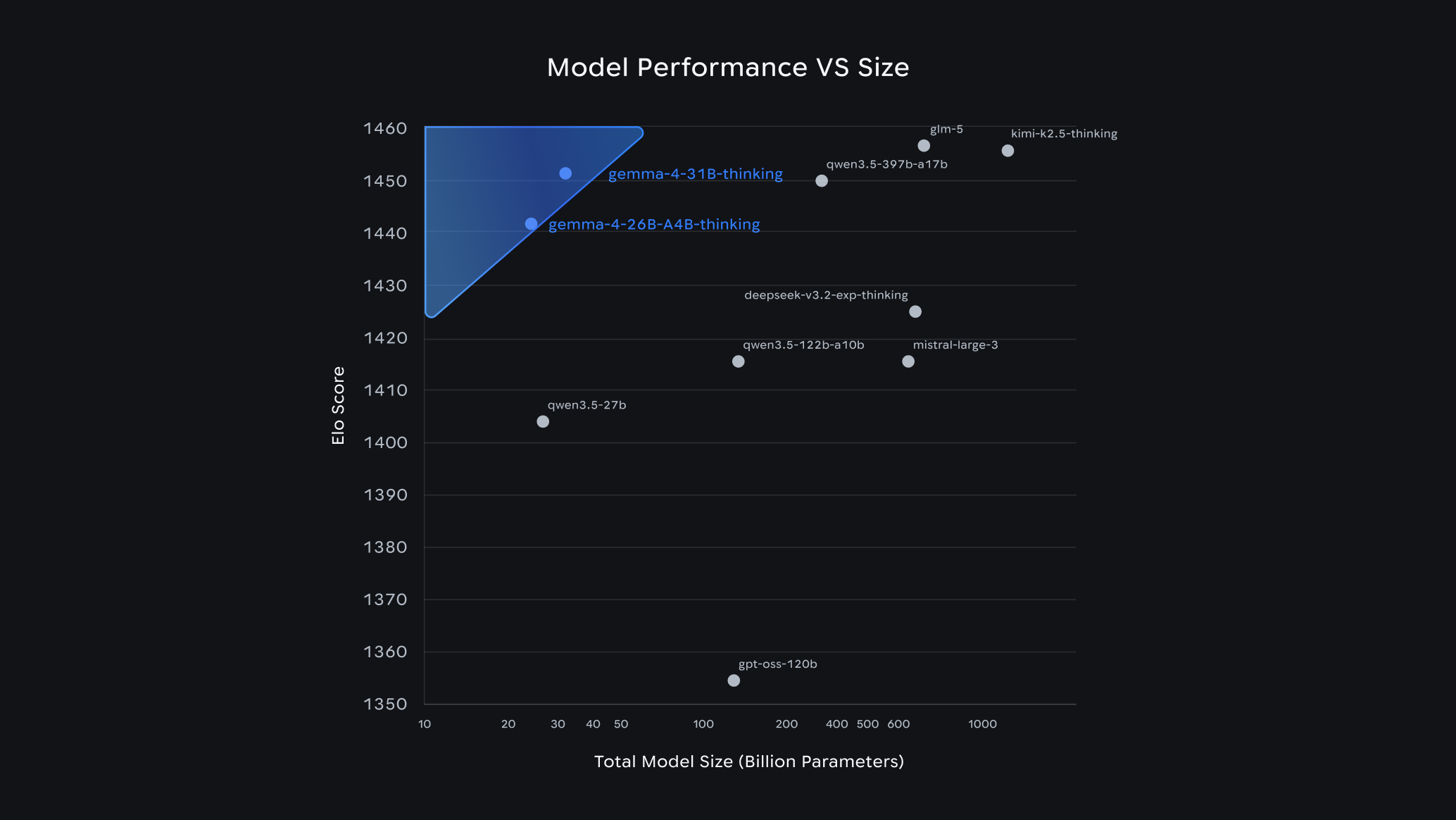

Gemma 4 arrives in four sizes: Effective 2B (E2B), Effective 4B (E4B), a 26B Mixture of Experts (MoE), and a 31B Dense model. At launch, the 31B model ranked #3 globally on Arena AI's text leaderboard and the 26B model took the #6 spot, outperforming models 20 times their size. Built on the same technology as Gemini 3, the models excel at advanced reasoning, agentic workflows, and code generation. All variants support multimodal inputs, including vision and audio, with context windows of up to 256K tokens and native training on more than 140 languages.

The edge-focused E2B and E4B models run completely offline on mobile devices and IoT hardware, enabling near-zero-latency applications. Through Google's AI Edge Gallery and the LiteRT-LM framework, these models power autonomous, multi-step agentic workflows entirely on-device. The E2B model needs less than 1.5 GB of memory on some devices and supports features such as constrained decoding for structured outputs and dynamic context handling across CPUs and GPUs. LiteRT-LM processes 4,000 input tokens across multiple skills in under three seconds, and throughput reaches 3,700 prefill tokens and 31 decode tokens per second on Qualcomm Dragonwing processors.
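Prefill and decode rates matter differently for responsiveness: prefill covers reading the prompt, while decode covers generating each output token one at a time. The sketch below is a rough, first-order estimate that simply adds the two phases and ignores model loading, tokenization, and scheduling overhead. It plugs in the Dragonwing figures quoted above; the 50-token reply length and the function name `estimate_latency_s` are illustrative assumptions, not part of LiteRT-LM.

```python
def estimate_latency_s(prompt_tokens: int, output_tokens: int,
                       prefill_tok_per_s: float, decode_tok_per_s: float) -> float:
    """First-order latency estimate: prompt prefill time plus output decode time.

    Hypothetical helper for illustration only; ignores model load time,
    tokenization, and any scheduling overhead.
    """
    if prefill_tok_per_s <= 0 or decode_tok_per_s <= 0:
        raise ValueError("throughputs must be positive")
    prefill_s = prompt_tokens / prefill_tok_per_s  # time to ingest the prompt
    decode_s = output_tokens / decode_tok_per_s    # time to generate the reply
    return prefill_s + decode_s


# Throughput figures quoted for Qualcomm Dragonwing processors.
PREFILL_TPS = 3_700
DECODE_TPS = 31

if __name__ == "__main__":
    # Assumed workload: a 4,000-token prompt and a 50-token reply.
    prefill_only = estimate_latency_s(4_000, 0, PREFILL_TPS, DECODE_TPS)
    total = estimate_latency_s(4_000, 50, PREFILL_TPS, DECODE_TPS)
    print(f"prefill: {prefill_only:.2f} s, with 50-token reply: {total:.2f} s")
```

At these rates, reading a 4,000-token prompt takes roughly 1.1 seconds, so for agentic tasks the length of the generated output tends to dominate end-to-end latency. The "under three seconds" figure above is not necessarily measured on the same hardware, so this is only a sanity check on orders of magnitude.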

Google optimized the two larger models to run on accessible hardware: their unquantized weights fit on a single 80GB NVIDIA H100 GPU.
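A quick back-of-the-envelope check shows why a single 80GB card is enough. The sketch below assumes "unquantized" means 16-bit (bf16) weights at 2 bytes per parameter and uses the nominal parameter counts from the model names; actual counts may differ slightly. For the MoE model, all experts must stay resident in memory even though only some are active per token, so the full 26B count applies. The `int4` entry is included only to show the effect of 4-bit quantization and is an assumption, not a published Gemma 4 configuration.

```python
# Bytes per parameter for a few common weight formats (assumed, for illustration).
BYTES_PER_PARAM = {"bf16": 2, "int4": 0.5}

H100_MEMORY_GB = 80  # marketed capacity of the 80GB H100


def weight_memory_gb(num_params: float, dtype: str = "bf16") -> float:
    """Memory needed to hold the weights alone, in decimal gigabytes (1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    # Nominal parameter counts taken from the model names.
    for name, params in [("31B Dense", 31e9), ("26B MoE", 26e9)]:
        gb = weight_memory_gb(params)
        headroom = H100_MEMORY_GB - gb
        print(f"{name}: {gb:.0f} GB of bf16 weights, "
              f"{headroom:.0f} GB left for KV cache and activations")
```

Under these assumptions the 31B model needs about 62 GB and the 26B MoE about 52 GB for weights, leaving some headroom on an 80GB card. That headroom must also hold the KV cache, which grows with context length, so serving very long contexts on one GPU may still require quantization or other memory savings.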
