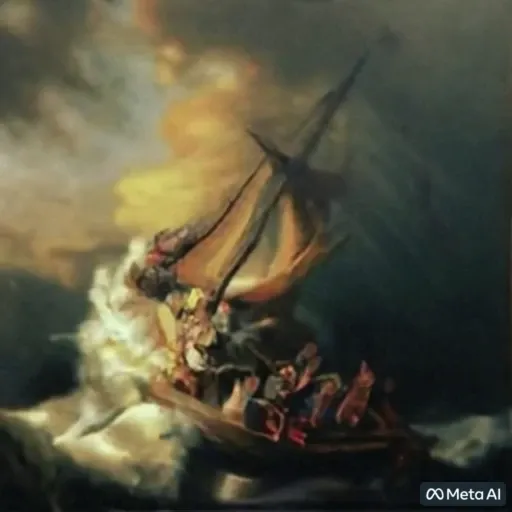

Earlier this year, Meta announced Make-A-Scene, a multimodal generative AI method that gives people more control over AI-generated content. It lets them create photorealistic illustrations and storybook-quality artwork from words, lines of text and free-form sketches. Meta has now introduced a new artificial intelligence system that opens up new possibilities for creators and artists: Make-A-Video, which turns text prompts into short, high-quality video clips.

Make-A-Video builds on Meta AI's latest advances in generative research. With just a few words or lines of text, it can bring the imagination to life, creating whimsical, one-of-a-kind videos full of vivid colors, characters and landscapes. The system learns what the world looks like, and how it is usually described, from images paired with captions. It learns how the world moves from unlabeled videos. Make-A-Video can also generate videos from still images, or take an existing video and create new variations that resemble it.

Make-A-Video was trained on publicly available datasets, which adds an extra level of transparency to the research. The system aims to advance creative expression by giving people tools to create new content quickly and easily.

Make-A-Video complements OpenAI's DALL-E neural network, which generates images from text descriptions. DALL-E has already dropped its beta waitlist, so users can now start using it at any time.
