Use of AI-generated Characters for Different Fields

To expand the technology's potential, researchers at the MIT Media Lab, together with colleagues at the University of California, Santa Barbara and Osaka University, have built an open-source, easy-to-use character generation pipeline that integrates AI models for facial gestures, voice, and motion. It can produce a variety of audio and video outputs.

The pipeline also marks the resulting output with a traceable, human-readable watermark, both to distinguish it from authentic video content and to show how it was generated.

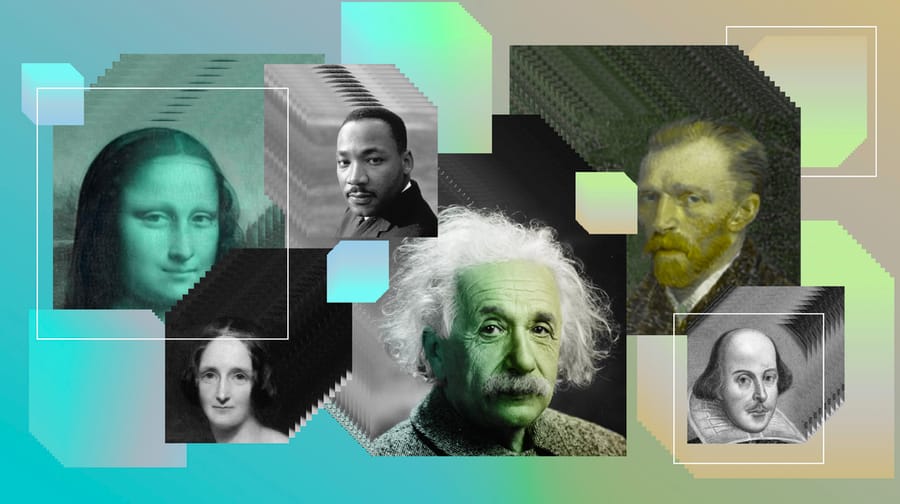

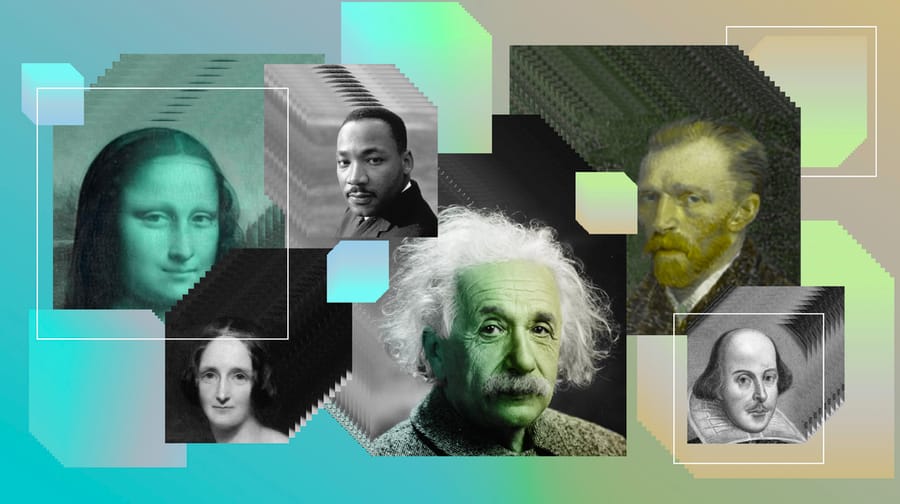

The technology suggests a way to tailor instruction to a learner's interests, context, and idols, and to adapt it over time.

Copyright © 2026 Data Phoenix. Published with Ghost and Data Phoenix.
