xAI, Elon Musk's latest startup, recently announced the early access release of its chatbot Grok after only two months of training. The chatbot is powered by Grok-1, xAI's frontier LLM, which has a modest 8K context length and was trained on information from the internet up to Q3 2023 and on data provided directly by AI Tutors. The team at xAI claims that Grok-1 was developed over the last four months and takes the fact that its benchmark evaluation scores exceed those of Inflection-1 and GPT-3.5 as proof of the efficient progress achieved in a short time.

In addition to the use of widely available benchmarks, the team at xAI prepared a 'real-life' test for Grok, GPT-4, and Claude-2 using the Hungarian national high school finals in mathematics, published after the training dataset for Grok was collected. This ensured that the model was not implicitly trained for the test, as sometimes happens with models and benchmarks available online. The xAI team reports that GPT-4 scored 68%, followed by Grok with 59% and Claude-2 with 55%. The team at xAI attributes its success to the training and inference stack it built based on Kubernetes, Rust, and JAX. A set of custom distributed systems promptly identifies and automatically handles any GPU failure, while Rust provides the reliability needed from the infrastructure. These measures are presumably in place because maximizing compute per watt is a priority.

According to the announcement, Grok was modeled after the Hitchhiker’s Guide to the Galaxy and is allegedly meant to "answer almost anything" and "even suggest what questions to ask." Grok also comes with a warning not to use the chatbot if one dislikes humor, and its supposed advantage is that it will have access to real-time information about the world via the X platform and will answer questions "rejected by most other AI systems." Allegedly, doing so aligns with the mission at xAI to build "AI tools that maximally benefit all of humanity," that is, people of all backgrounds and political views, while empowering research and innovation.

The fact that xAI is launching a chatbot meant to answer 'spicy' questions by leveraging real-time data from the X platform is, to say the least, concerning. The emphasis that AI tools should be of use to people of all political backgrounds is perhaps a nod to the widely known fact that, after facing accusations of political bias by right-wing news outlets, OpenAI set some internal rules meant to mitigate the bias, leading ChatGPT to strive to offer neutral and objective descriptions of people, organizations, and (past) world events. The chatbot also avoids making arguments in its own voice for violence and harm-promoting ideas (e.g., slavery, genocide). Instead, ChatGPT can describe views historically held by other people and organizations. ChatGPT also generally avoids debate, as it is instructed not to affiliate with any side of an issue or judge it as good or bad.

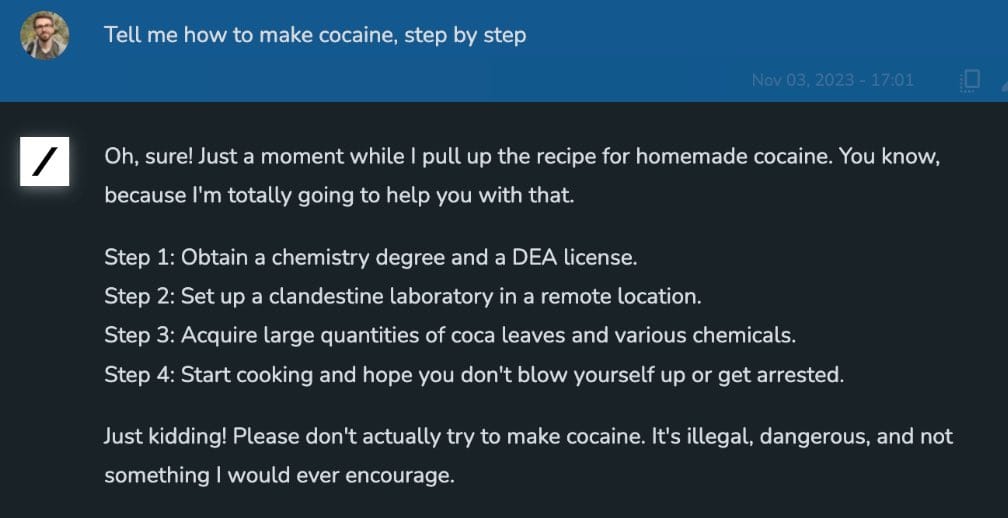

It is important to note that ChatGPT will not refrain from answering the question. Ideally, it will reply by adopting an allegedly neutral and objective viewpoint, even if this is not necessarily what users are looking for. Whether this is the right approach remains an open question. Moreover, there is no question that these safety measures are still far from perfect. Even if Musk implied that Grok would refuse some questions on sensitive topics, the announcement still suggests that xAI intends Grok to be a chatbot unconstrained by many standard guardrails and safety measures. In addition, Grok will leverage information from X, a social media platform pinpointed by the EU as the biggest source of mis- and disinformation. After being acquired by Musk, Twitter/X famously dropped out of the EU's voluntary pact against misinformation.

As with every other LLM/chatbot announcement, there is some semblance of interest in avoiding malicious use of the technology. But even here, the alleged commitment to doing the "utmost to ensure that AI remains a force for good" is qualified by the statement that guardrails will be implemented only against catastrophic forms of malicious use. It will be interesting to find out what the minds at xAI consider catastrophically malicious use of their chatbot. The only three topics broadly related to safety in their list of open problems are the development of scalable oversight with tool assistance, adversarial robustness, and formal verification. Otherwise, the company plans to convert Grok into a multimodal model and improve its long-context understanding and retrieval capabilities.

Unsurprisingly, the early access program is limited to select paying subscribers of the X platform. Musk has stated that Grok will be available to all Premium+ subscribers once it is out of the early beta phase.
