GitOps is a DevOps paradigm that helps teams ship software faster by applying practices such as version control, collaboration, compliance, and CI/CD. In ML, GitOps is still at an experimental stage and hasn’t been adopted at scale yet.

This post summarizes some of the ideas around GitOps and ML that we’ve been working on with dstack. You may find it relevant if you’re interested in productizing ML models and the developer tooling around them.

GitOps and ML workflows

Let’s start by fixing the terminology. A workflow is a group of tasks performed on a regular basis to achieve a goal. ML workflows may include exploring data, processing data, running experiments, running apps, and deploying apps, to name a few.
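To make the definition concrete, here is a minimal sketch of a workflow as a group of tasks with dependencies, executed in dependency order. The `Task` class and `run_workflow` function are hypothetical names used only for illustration; they are not part of dstack. The ordering relies on Python’s standard `graphlib` module (Python 3.9+).

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter
from typing import Callable


@dataclass
class Task:
    """One step of a workflow (hypothetical structure, for illustration only)."""
    name: str
    action: Callable[[], None]
    depends_on: list[str] = field(default_factory=list)


def run_workflow(tasks: list[Task]) -> list[str]:
    """Run every task after the tasks it depends on; return the execution order."""
    by_name = {t.name: t for t in tasks}
    graph = {t.name: set(t.depends_on) for t in tasks}
    order = list(TopologicalSorter(graph).static_order())
    for name in order:
        by_name[name].action()
    return order


# Usage: a tiny ML workflow where each task only records that it ran.
log: list[str] = []
workflow = [
    Task("deploy-app", lambda: log.append("deploy-app"), depends_on=["train"]),
    Task("train", lambda: log.append("train"), depends_on=["process-data"]),
    Task("process-data", lambda: log.append("process-data")),
]
print(run_workflow(workflow))  # ['process-data', 'train', 'deploy-app']
```

The tasks are deliberately listed out of order: the dependency graph, not the listing, decides what runs first.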

Workflows vary depending on where they are performed. To run experiments, you may want to use an IDE or a Jupyter notebook. To prepare data and train models, you may want to use a cron scheduler or trigger them from your CI/CD pipeline. You may also want to trigger ML workflows programmatically, e.g., based on certain business events in your application.

ML workflows and traditional software development have a lot in common. Both involve writing and versioning code and collaborating, and both end with apps deployed to production. But this is where the similarities end.

Problem 1: Friction between roles

ML workflows span two major phases: experimentation and productizing. The problem is that these phases typically involve different roles and approaches. As a result, when it comes to productizing the results of an experiment, the solution often has to be reworked from scratch.

The worst case is when these phases are handled by different teams that use different tools and follow different practices.

Problem 2: Versioning data & models

Unlike traditional development workflows, ML workflows produce data and models as artifacts, and these are more difficult to version than code.

To be clear, many tools today help version data and models. However, because there are no industry standards, many different data management approaches coexist.

How do you set up experimentation and development so that data and models are versioned and easy to reuse across teams and scenarios?

Take Git, for example. It’s agnostic to both vendor and use case, it works across different scenarios, and it takes little effort to manage (especially given hosted services such as GitHub and GitLab).

Versioning data and models is not a question of tools. It’s a question of finding an approach as ubiquitous and universal as Git is for code.
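For comparison, Git identifies every version of a file by a hash of its content: identical bytes always get the same ID, and any change produces a new one. The sketch below reproduces that computation for Git’s default SHA-1 object format and applies the same content-addressing idea to a data or model file. `dataset_version` is a hypothetical helper that illustrates the general principle only; it is not how dstack versions data.

```python
import hashlib


def git_blob_id(data: bytes) -> str:
    """Return the ID Git assigns to file content (what `git hash-object` prints)."""
    header = f"blob {len(data)}\0".encode()
    return hashlib.sha1(header + data).hexdigest()


def dataset_version(data: bytes) -> str:
    """Hypothetical helper: identify a dataset or model file by its SHA-256 digest."""
    return hashlib.sha256(data).hexdigest()


print(git_blob_id(b""))  # e69de29bb2d1d6434b8b29ae775ad8c2e48c5391 (Git's empty blob)
print(dataset_version(b"a,b\n1,2\n") == dataset_version(b"a,b\n1,2\n"))  # True
```

For multi-gigabyte datasets you would feed the hash in chunks rather than loading the whole file into memory; the hard part the section describes is agreeing on such a scheme across teams, not computing the hash.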

Problem 3: Workflow orchestration

In traditional development, we have CI/CD for building software, and Infrastructure-as-Code for deploying it.

When it comes to ML, many subtle aspects prevent traditional tools from working efficiently: GPU acceleration, the use of spot cloud instances, handling large data, distributed workflows, etc.

Plenty of tools address each of these aspects individually, but there is no sufficiently generic approach that supports multiple use cases.

Introduction to dstack

We built dstack to address these challenges.

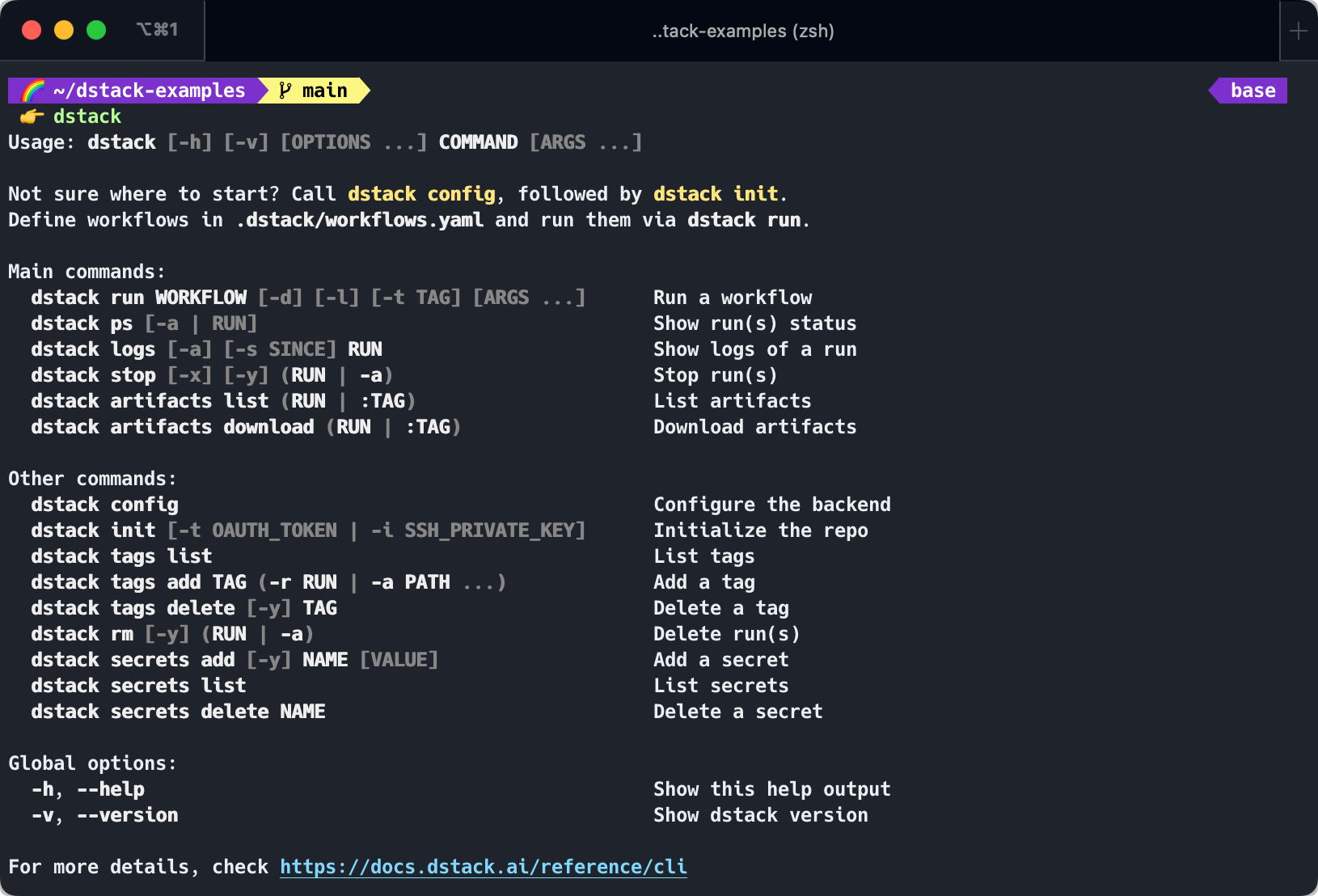

dstack is a lightweight open-source tool that lets you define ML workflows as code and run them directly in a configured cloud, while it takes care of dependency management and artifact versioning.

In the rest of this post, I’d like to look at some of the dstack features designed to bring the key principles of GitOps to ML.

Version control and CI/CD

dstack allows ML workflows to be defined as code. When you run a workflow, it tracks the current revision of the repository, including local changes that you haven’t committed. This makes it possible to use dstack both from an IDE and from your CI/CD pipeline.
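One way to capture “the current revision, including local changes” is to record the commit hash together with the diff of uncommitted changes. The sketch below does this with plain `git` commands; `capture_revision` and `revision_key` are hypothetical names, and this illustrates the idea rather than dstack’s implementation. Note that `git diff HEAD` covers staged and unstaged changes to tracked files, but not untracked files.

```python
import hashlib
import subprocess


def revision_key(commit: str, diff: str) -> str:
    """Hypothetical: a stable ID for 'this commit plus these local changes'."""
    if not diff:
        return commit  # clean working tree: the commit alone identifies the code
    digest = hashlib.sha256(diff.encode()).hexdigest()[:12]
    return f"{commit}+dirty.{digest}"


def _git(repo_dir: str, *args: str) -> str:
    """Run a git command inside repo_dir and return its stdout."""
    return subprocess.run(
        ["git", *args], cwd=repo_dir, check=True, capture_output=True, text=True
    ).stdout


def capture_revision(repo_dir: str) -> dict[str, str]:
    """Record the current commit and the uncommitted changes to tracked files."""
    commit = _git(repo_dir, "rev-parse", "HEAD").strip()
    diff = _git(repo_dir, "diff", "HEAD")  # staged + unstaged changes vs. HEAD
    return {"commit": commit, "diff": diff, "key": revision_key(commit, diff)}


# Usage inside a Git repository: capture_revision(".")
```

Storing the diff itself, not just its hash, is what lets a run be reproduced later on another machine by checking out the commit and applying the patch.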

dstack works directly with the cloud. Just as Terraform implements Infrastructure-as-Code, dstack implements a similar principle called Workflows-as-Code. To use dstack, you only need Git and cloud credentials.
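To illustrate the Terraform analogy: in both cases you declare the desired result in files kept in Git, and the tool works out what must run to reach it. The sketch below is purely hypothetical and does not reflect dstack’s actual configuration format. `WorkflowSpec` declares a workflow’s commands, resources, and dependencies, and `plan` lists the workflows that still need to run, skipping those whose outputs already exist, loosely mirroring `terraform plan`. The script names `prepare.py` and `train.py` are placeholders.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Resources:
    gpus: int = 0
    interruptible: bool = False  # e.g. allow cheaper spot instances


@dataclass(frozen=True)
class WorkflowSpec:
    """Hypothetical declarative workflow definition, kept in the repo as code."""
    name: str
    commands: tuple[str, ...]
    resources: Resources = field(default_factory=Resources)
    depends_on: tuple[str, ...] = ()


def plan(specs: dict[str, WorkflowSpec], target: str, finished: set[str]) -> list[str]:
    """Return the workflows to run (dependencies first) to produce `target`,
    skipping any whose outputs already exist in `finished`. Assumes no cycles."""
    order: list[str] = []
    seen: set[str] = set()

    def visit(name: str) -> None:
        if name in seen or name in finished:
            return
        seen.add(name)
        for dep in specs[name].depends_on:
            visit(dep)
        order.append(name)

    visit(target)
    return order


specs = {
    "prepare-data": WorkflowSpec("prepare-data", ("python prepare.py",)),
    "train": WorkflowSpec(
        "train",
        ("python train.py",),
        resources=Resources(gpus=1, interruptible=True),
        depends_on=("prepare-data",),
    ),
}
print(plan(specs, "train", finished=set()))             # ['prepare-data', 'train']
print(plan(specs, "train", finished={"prepare-data"}))  # ['train']
```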

For versioning data, dstack doesn’t use Git. Instead, it stores the output artifacts of each workflow. Below, I’ll explain how it works.

Collaboration

Artifacts are first-class citizens in dstack. Output artifacts can include prepared data, a trained model, or even a pre-configured Conda environment.

Artifacts are stored immutably in the configured S3 storage and are identified by the name of the run that produced them. To reuse artifacts across multiple workflows and teams, you can assign tags to finished runs and use these tags to refer to a particular revision of output artifacts.

As long as teams use the same S3 bucket, they can reuse each other’s artifacts across different repositories.
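The tagging scheme can be sketched with an in-memory stand-in for the shared bucket: artifacts are written once per run name and never overwritten, and a tag is simply a name that points to a finished run. `ArtifactStore` and its methods are hypothetical and do not reflect dstack’s API. In this sketch a tag can be reassigned to a newer run (an assumption), while the artifacts themselves stay immutable.

```python
class ArtifactStore:
    """In-memory stand-in for a shared S3 bucket (hypothetical, not dstack's API)."""

    def __init__(self) -> None:
        self._runs: dict[str, dict[str, bytes]] = {}  # run name -> {path: content}
        self._tags: dict[str, str] = {}  # tag -> run name

    def save(self, run_name: str, artifacts: dict[str, bytes]) -> None:
        """Store a finished run's outputs; an existing run can never be overwritten."""
        if run_name in self._runs:
            raise ValueError(f"artifacts of run {run_name!r} are immutable")
        self._runs[run_name] = dict(artifacts)

    def tag(self, run_name: str, tag: str) -> None:
        """Point a human-friendly tag at a finished run (re-tagging is an assumption)."""
        if run_name not in self._runs:
            raise KeyError(f"unknown run {run_name!r}")
        self._tags[tag] = run_name

    def load(self, ref: str) -> dict[str, bytes]:
        """Resolve a tag or a run name and return a copy of that run's artifacts."""
        run_name = self._tags.get(ref, ref)
        return dict(self._runs[run_name])


# Two teams, two repositories, one shared bucket.
bucket = ArtifactStore()
bucket.save("run-42", {"data/train.csv": b"x,y\n1,2\n"})  # team A, data-prep repo
bucket.tag("run-42", "dataset-v1")
print(bucket.load("dataset-v1")["data/train.csv"])  # b'x,y\n1,2\n' (team B, training repo)
```

Referring to data by tag keeps consumers stable: team B’s code names `dataset-v1`, and which run backs it is decided where the tag is assigned.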

The central idea behind dstack is reproducibility. All workflows, including interactive ones, are defined as code. The tool encourages the use of Git, Python scripts, Python packages, and versioned data from day one.

Because workflows are reproducible from the experimentation stage onward, the need to involve other roles, such as MLOps engineers, to rework them for production is largely eliminated.

About us

dstack is 100% open-source, so your contribution is very much appreciated. You can help with improving the documentation, adding more examples, fixing issues, or adding new features.

The current version of dstack is just the beginning. We have a grand vision and plan to work to make it a reality. As more teams start using dstack, we’re receiving a lot of feedback, and we plan to grow our team to address it, improve stability, and add missing features.

Our team is passionate about dev tools and developer productivity. If you feel the same and would like to collaborate, please let us know! For feedback and questions, please reach out to us by email.
