Welcome to this week's edition of Data Phoenix Digest! This newsletter keeps you up to date on news from our community and summarizes the top research papers, articles, and news to help you keep track of trends in the Data & AI world!

Be active in our community: join our Slack to discuss the latest community news, top research papers, articles, events, jobs, and more...

Click here for details.

Call For Speakers

We regularly host webinars for our global AI and Data community of Engineers, Executives, and Founders. Being a speaker at our events is a great opportunity to share your technical expertise and knowledge with the Data Phoenix community.

To be considered as a speaker at our future events, please fill out this form.

Latest news

- Jua, a startup building the first 'large physics model' of the natural world, raised $16M in a successful seed round.

- Entrust is in exclusive negotiations to acquire Onfido, reportedly for over $400 million.

- Backed by neuroscientists, Elemind came out of stealth and closed a $12M seed round for its AI-assisted wearable.

- Colossyan announces a $22 million Series A round.

- Analytical Alley raised €700k for its AI-driven marketing solution.

- Meta is working on a new standard to label AI-generated images on Facebook, Instagram and Threads.

- FCC declares AI-generated voices 'artificial', making them illegal to use in automated calls.

- The EU launched a consultation containing draft election security mitigations along with other content moderation recommendations.

- The Biden-Harris administration announces first-of-its-kind consortium on AI safety.

- Arize AI releases Phoenix 3.0.

Find details and more news in our weekly review, "This Week in AI".

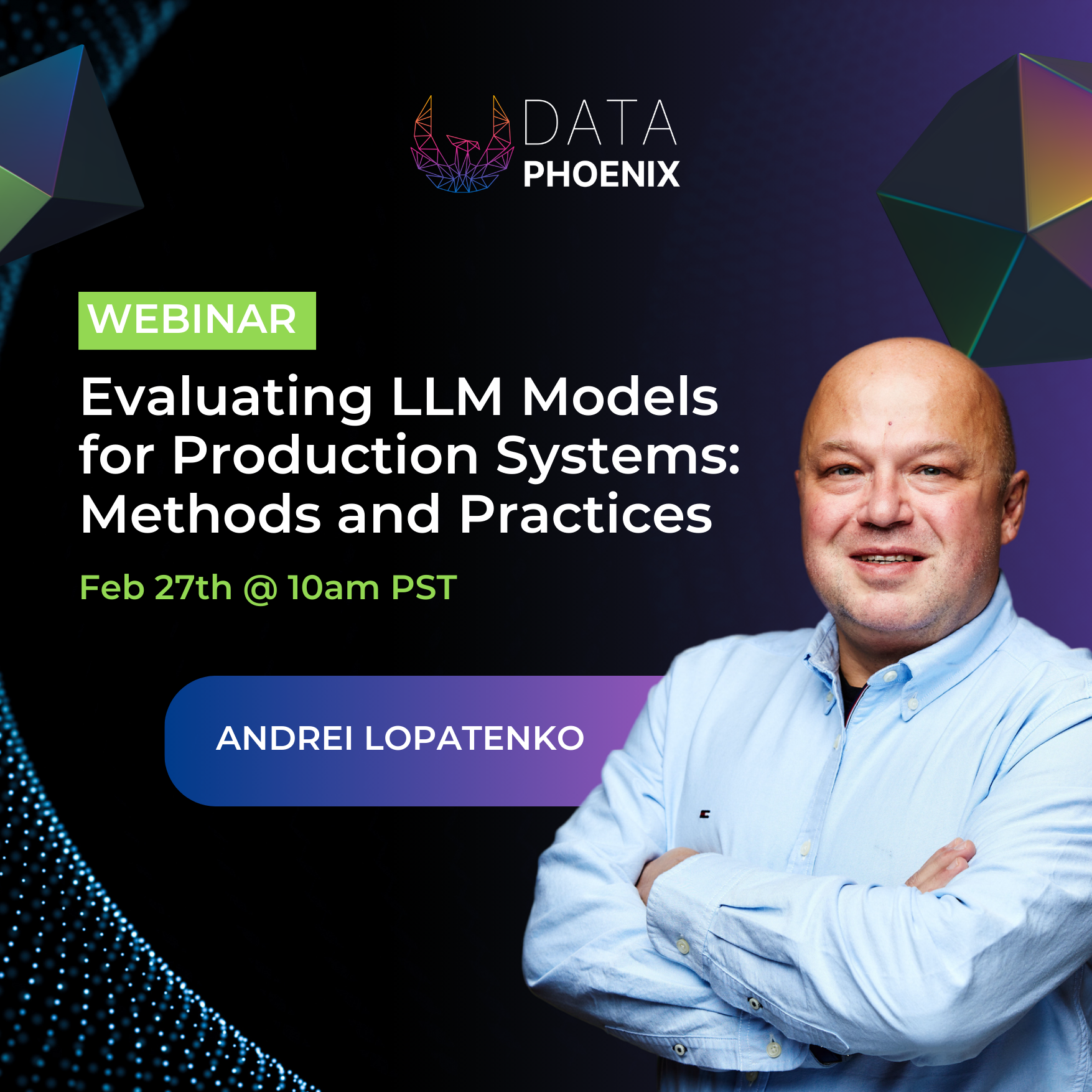

Data Phoenix's upcoming webinar:

Evaluating LLM Models for Production Systems: Methods and Practices

This webinar is designed to offer a comprehensive understanding of the evaluation processes for LLMs, particularly in the context of preparing these models for deployment in production environments.

Key Highlights of the webinar:

- In-Depth Analysis of LLM Evaluation Methods: Gain insights into a variety of methods to evaluate LLM models, understanding their strengths and weaknesses.

- End-to-End Evaluation Techniques: Explore how LLM-augmented systems are assessed from a holistic perspective.

- Pragmatic Approach to System Deployment: Learn practical strategies for applying these evaluation techniques to systems intended for real-world application.

- Focused Overview on Critical LLM Aspects: Receive an overview of various evaluation techniques that are essential for assessing the most crucial elements of modern LLM systems.

- Simplifying the Evaluation Process: Understand how to streamline the evaluation process, making the work of LLM scientists more efficient and productive.

Speaker: Dr. Andrei Lopatenko is a seasoned expert and executive leader with over 15 years of experience in the tech industry, focusing on search engines, recommendation systems, and large-scale AI, ML, and NLP applications. He has contributed significantly to major companies like Google, Apple, Walmart, eBay, and Zillow, benefiting billions of customers. Dr. Lopatenko earned his PhD in Computer Science from the University of Manchester. He played a key role in developing Google's search engine, initiating Apple Maps, co-founding a Conversational AI startup acquired by Facebook/Meta, and leading Search, LLM, and Generative AI at Zillow.

Summary of the top articles and papers

Articles

Benchmark and Optimize Endpoint Deployment in Amazon SageMaker JumpStart

When deploying LLMs, ML practitioners care about two metrics for model serving performance: latency and throughput. This article explores how the two relate during model serving, accounting for model architecture, serving configurations, and more.
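To make the two metrics concrete, here is a minimal Python sketch that summarizes a benchmark run. The `(start_s, end_s, output_tokens)` record format and all timings are hypothetical, not taken from the article or from SageMaker. Serving the same four requests as one batch raises throughput but also raises per-request latency, which is the trade-off the article examines.

```python
import statistics

def summarize(requests):
    """Summarize (start_s, end_s, output_tokens) records from one benchmark run."""
    latencies = sorted(end - start for start, end, _ in requests)
    # Wall-clock window from the first request start to the last request end
    window = max(end for _, end, _ in requests) - min(start for start, _, _ in requests)
    total_tokens = sum(tokens for _, _, tokens in requests)
    return {
        "p50_latency_s": statistics.median(latencies),
        "max_latency_s": latencies[-1],
        "requests_per_s": len(requests) / window,
        "tokens_per_s": total_tokens / window,
    }

# Hypothetical timings: four requests served one at a time...
sequential = summarize([(0.0, 1.0, 100), (1.0, 2.0, 100), (2.0, 3.0, 100), (3.0, 4.0, 100)])
# ...versus the same four requests batched together, each finishing more slowly
batched = summarize([(0.0, 1.5, 100)] * 4)
print(sequential["requests_per_s"], sequential["p50_latency_s"])  # 1.0 1.0
print(round(batched["requests_per_s"], 2), batched["p50_latency_s"])  # 2.67 1.5
```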

Low Quality Image Detection—Part 1

Low-quality image detection is an interesting ML problem because it addresses real-world challenges across diverse applications. This article explains how to use ML/DL to detect low-quality images, covering blur, glare, and noise detection.
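One common baseline for the blur case (not necessarily the method the article uses) is the variance of the Laplacian: sharp images have strong edges and therefore a high-variance Laplacian response. Below is a minimal NumPy sketch; the 4-neighbour kernel and the `threshold=100.0` cut-off are assumptions that would need tuning on real data.

```python
import numpy as np

def laplacian_variance(gray: np.ndarray) -> float:
    """Variance of the 4-neighbour Laplacian over the interior of a 2-D grayscale image."""
    img = gray.astype(np.float64)
    # Discrete Laplacian: up + down + left + right - 4 * center (border pixels skipped)
    lap = (img[:-2, 1:-1] + img[2:, 1:-1] + img[1:-1, :-2] + img[1:-1, 2:]
           - 4.0 * img[1:-1, 1:-1])
    return float(lap.var())

def is_blurry(gray: np.ndarray, threshold: float = 100.0) -> bool:
    # Few strong edges -> low Laplacian variance -> likely blurry (heuristic threshold)
    return laplacian_variance(gray) < threshold

sharp = (np.indices((64, 64)).sum(axis=0) % 2) * 255.0   # pixel checkerboard: all edges
smooth = np.tile(np.linspace(0.0, 255.0, 64), (64, 1))   # linear ramp: no edges
print(is_blurry(sharp), is_blurry(smooth))  # False True
```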

Stock Price Prediction with Quantum Machine Learning in Python

This article dives into the intersection of quantum computing and AI/ML by comparing the performance of a quantum neural network with that of a simple single-layer MLP for stock price time series forecasting. Explore the major challenges of the task and their solutions!

Generating Images from Audio with Machine Learning

In this step-by-step guide, you’ll learn how to create amazing images from audio using Machine Learning and Transformers. It explains each step clearly, uncovers the secrets behind Whisper, and highlights the incredible abilities of Hugging Face models.

Sampling for Text Generation

ML models are probabilistic, which makes them great for creative tasks while also causing inconsistencies and hallucinations. To understand why, we need to understand how models generate responses, a process known as sampling (or decoding). Learn more!
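As a rough illustration of sampling, here is a minimal NumPy sketch that draws the next token from a model's logits with temperature, top-k, and top-p (nucleus) filtering. The function name, its parameters, and the toy logits are hypothetical; production inference libraries implement the same ideas in batched, GPU-friendly form.

```python
import numpy as np

def sample_next_token(logits, temperature=1.0, top_k=None, top_p=None, rng=None):
    """Pick the next token id from raw logits."""
    if rng is None:
        rng = np.random.default_rng()
    logits = np.asarray(logits, dtype=np.float64)
    if temperature == 0:
        return int(np.argmax(logits))  # greedy decoding: always the most likely token
    scaled = logits / temperature      # T < 1 sharpens, T > 1 flattens the distribution
    probs = np.exp(scaled - scaled.max())
    probs /= probs.sum()               # softmax
    order = np.argsort(probs)[::-1]    # token ids, most likely first
    keep = np.ones(len(order), dtype=bool)
    if top_k is not None:
        keep[top_k:] = False           # top-k: keep only the k most likely tokens
    if top_p is not None:
        cumulative = np.cumsum(probs[order])
        cutoff = int(np.searchsorted(cumulative, top_p)) + 1
        keep[cutoff:] = False          # top-p: smallest prefix whose mass reaches top_p
    kept = order[keep]
    kept_probs = probs[kept] / probs[kept].sum()
    return int(rng.choice(kept, p=kept_probs))

rng = np.random.default_rng(0)
logits = [2.0, 1.0, 0.1, -1.0]  # toy 4-token vocabulary
print(sample_next_token(logits, temperature=0))  # 0
print([sample_next_token(logits, temperature=1.5, top_k=2, rng=rng) for _ in range(5)])
```

Greedy decoding is deterministic; every other setting trades some consistency for variety, which is the tension the article describes.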

Papers & projects

MoE-LLaVA: Mixture of Experts for Large Vision-Language Models

MoE-Tuning is a simple training strategy for Large Vision-Language Models (LVLMs). It addresses the performance degradation commonly seen in multi-modal sparsity learning, making it possible to construct a sparse model with an outrageous number of parameters but a constant computational cost. Learn more about it!
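The "constant computational cost" comes from sparse routing: a router sends each token to only its top-k experts, so adding experts increases the parameter count without increasing per-token compute. The sketch below is a generic top-k MoE layer in NumPy with hypothetical sizes; it does not reproduce MoE-LLaVA's architecture or the MoE-Tuning training procedure itself.

```python
import numpy as np

class TopKMoE:
    """Toy mixture-of-experts layer with top-k routing (a generic sketch)."""

    def __init__(self, d_model, n_experts, k=2, seed=0):
        rng = np.random.default_rng(seed)
        self.k = k
        self.router = rng.normal(size=(d_model, n_experts))  # gating weights
        # Each "expert" is a single linear map here; real experts are usually MLPs
        self.experts = [rng.normal(size=(d_model, d_model)) / np.sqrt(d_model)
                        for _ in range(n_experts)]

    def forward(self, x):
        """x: (n_tokens, d_model). Returns the outputs and the number of expert calls."""
        logits = x @ self.router
        out = np.zeros_like(x)
        calls = 0
        for t in range(x.shape[0]):
            top = np.argsort(logits[t])[-self.k:]       # the k highest-scoring experts
            w = np.exp(logits[t, top] - logits[t, top].max())
            w /= w.sum()                                 # softmax over the chosen experts only
            for weight, e in zip(w, top):
                out[t] += weight * (x[t] @ self.experts[e])
                calls += 1
        return out, calls

x = np.random.default_rng(1).normal(size=(8, 16))  # 8 tokens, d_model = 16
for n_experts in (4, 16, 64):
    _, calls = TopKMoE(d_model=16, n_experts=n_experts, k=2).forward(x)
    print(n_experts, calls)  # calls stays at 8 tokens * 2 experts = 16
```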

InstantID: Zero-shot Identity-Preserving Generation in Seconds

InstantID is a powerful diffusion model-based solution. Its plug-and-play module handles image personalization in various styles using a single facial image, while ensuring high fidelity. InstantID demonstrates exceptional performance and efficiency, proving highly beneficial in real-world applications where identity preservation is paramount.

YOLO-World: Real-Time Open-Vocabulary Object Detection

YOLO-World is an innovative approach that enhances YOLO with open-vocabulary detection capabilities through vision-language modeling and pre-training on large-scale datasets. It excels in detecting a wide range of objects in a zero-shot manner with high efficiency, outperforming many state-of-the-art methods in terms of both accuracy and speed.
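The open-vocabulary idea boils down to scoring region features against text embeddings of arbitrary class prompts, so the vocabulary can change at inference time without retraining. The sketch below shows only that final matching step using cosine similarity; the 3-D embeddings, class names, and `threshold=0.7` cut-off are made up for illustration, and YOLO-World's actual detector and encoders are not reproduced.

```python
import numpy as np

def l2_normalize(v):
    v = np.asarray(v, dtype=np.float64)
    return v / np.linalg.norm(v, axis=-1, keepdims=True)

def classify_regions(region_emb, text_emb, class_names, threshold=0.7):
    """Label each region with its closest class prompt, or None below the threshold."""
    sims = l2_normalize(region_emb) @ l2_normalize(text_emb).T  # (n_regions, n_classes) cosine sims
    labels = []
    for i, j in enumerate(sims.argmax(axis=1)):
        labels.append(class_names[j] if sims[i, j] >= threshold else None)
    return labels

# Hypothetical 3-D embeddings standing in for real region features and text-encoder outputs
vocab = ["cat", "dog", "bicycle"]
text_emb = np.eye(3)
region_emb = [[0.9, 0.1, 0.0], [0.1, 0.2, 0.9], [0.5, 0.5, 0.5]]
print(classify_regions(region_emb, text_emb, vocab))  # ['cat', 'bicycle', None]
```

Swapping in a new vocabulary only means encoding new prompts into `text_emb`; the region side is untouched.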

AesBench: An Expert Benchmark for Multimodal Large Language Models on Image Aesthetics Perception

AesBench is an expert benchmark that aims to comprehensively evaluate the aesthetic perception capabilities of multimodal large language models (MLLMs) through an elaborate design across two facets. The evaluation shows that current MLLMs possess only rudimentary aesthetic perception ability, and the authors hope their work will encourage others to explore and address the problem.

OMG-Seg: Is One Model Good Enough For All Segmentation?

OMG-Seg is One Model that is Good enough to efficiently and effectively handle all the segmentation tasks, including image semantic, instance, and panoptic segmentation, as well as their video counterparts, open vocabulary settings, prompt-driven, interactive segmentation like SAM, and video object segmentation. Learn more about it!
