I want to thank every one of our subscribers for your support and wish you all Happy Holidays!

Dmitry Spodarets

ARTICLES

AI Image Generator — Imaged from Thin Air

Artificial-intelligence-generated imagery is a rapidly emerging technology that is now in the hands of anyone with a smartphone. This non-technical article explains how AI can generate images seemingly from thin air. Check it out!

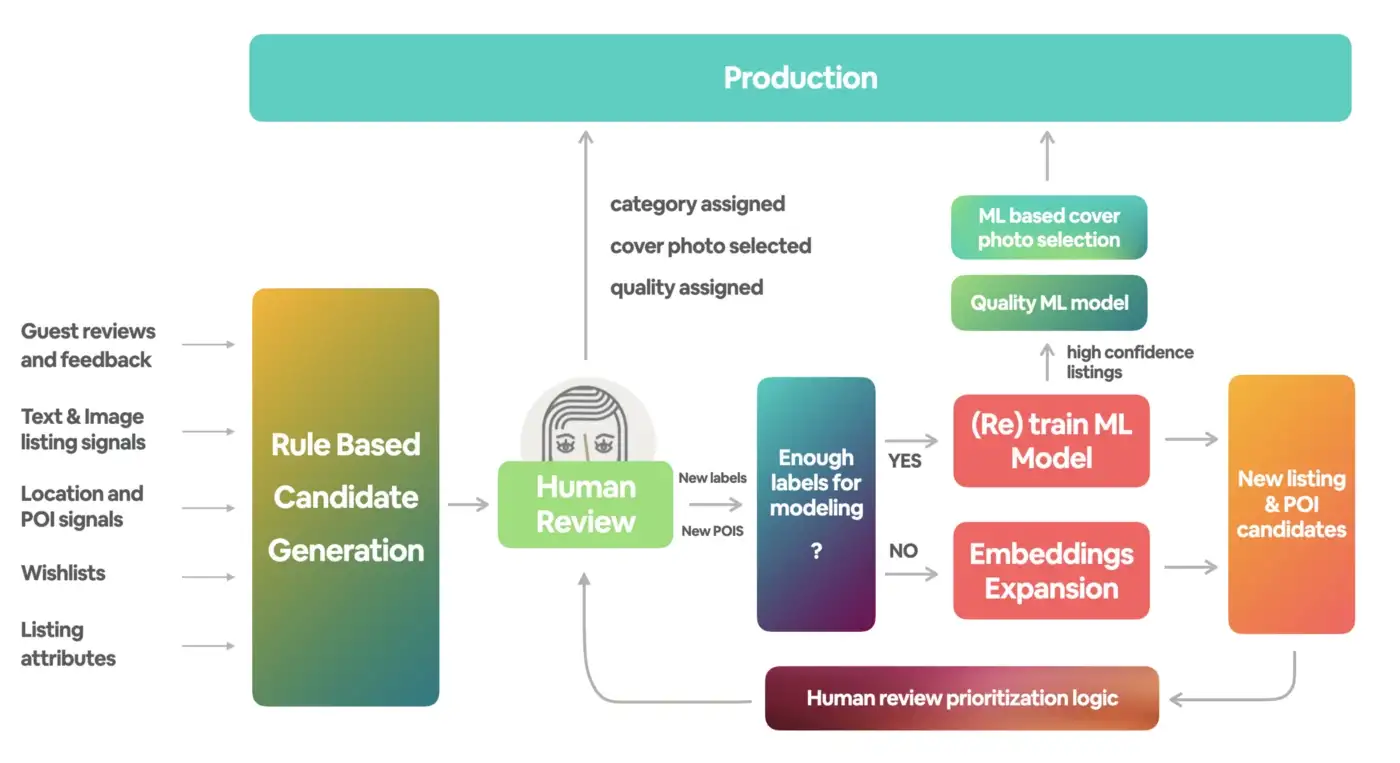

Building Airbnb Categories with ML and Human-in-the-Loop

This high-level introductory post explains how Airbnb applied ML to build out its listing collections and to solve several tasks related to the browsing experience, specifically quality estimation, photo selection, and ranking. Learn more about how Airbnb is innovating on the travel experience!

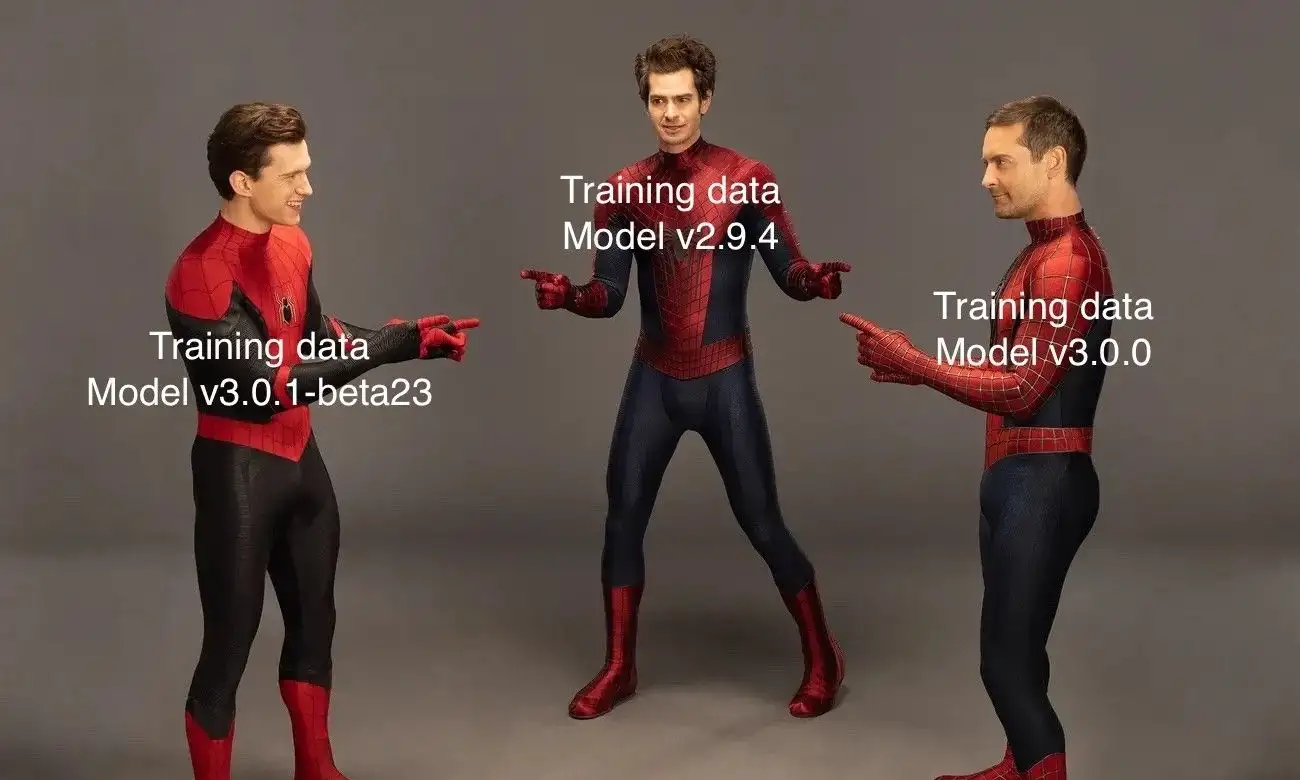

Data Versioning for ML in Airflow

Airflow at its core is a workflow orchestration tool. There are hundreds of open source integrations that make it easy to build a workflow without much code. In this article, the author dives deeper into how they enhanced Airflow to support data versioning for Machine Learning.

The Illustrated Stable Diffusion

AI image generation is the most recent AI capability blowing people’s minds. The release of Stable Diffusion is a clear milestone in this development, because it made a high-performance model available to the masses. This is a gentle introduction to how Stable Diffusion works.

Guide to Building AWS Lambda Functions from ECR Images to Manage SageMaker Inference Endpoints

In this article, the author breaks down the process of building a lambda function for machine-learning API endpoints. This tutorial is part of a series about building hardware-optimized SageMaker endpoints with the Intel AI Analytics Toolkit. Check it out!

How AI Text Generation Models Are Reshaping Customer Support at Airbnb

One of the fastest-growing areas in modern Artificial Intelligence (AI) is AI text generation models. Airbnb has heavily invested in AI text generation models, which has enabled many new capabilities and use cases. This article discusses three of these use cases in detail.

Three Ways To Aggregate Data In PySpark

Apache Spark is a data processing engine that is exceptionally fast at performing aggregations on large datasets. In this tutorial, the author shares three methods for performing aggregations on a PySpark DataFrame using Python and explains when it makes sense to use each of them.

Into The Transformer

The Transformer has proved to be state-of-the-art in the field of NLP and has subsequently made its way into CV. This article covers the dimensions of all the sub-layers in the Transformer and calculates the total number of parameters in a sample Transformer model.
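
As a rough illustration of this kind of parameter count, here is a minimal Python sketch. It is not taken from the article: the function names are hypothetical, and the configuration (model width 512, feed-forward width 2048, 6 encoder and 6 decoder layers, a shared vocabulary of 37,000 tokens, biases on every linear layer) is an assumption modeled on the commonly cited "base" encoder-decoder setup, so the article's sample model may differ.

```python
# Hypothetical helpers for counting Transformer parameters.
# Assumptions: biases on all linear layers, one shared embedding matrix,
# and no learned positional embeddings (sinusoidal encodings have no weights).

def attention_params(d_model: int) -> int:
    # Four projections (query, key, value, output), each d_model x d_model plus a bias.
    # The number of heads does not change the count when d_k = d_model / heads.
    return 4 * (d_model * d_model + d_model)

def feed_forward_params(d_model: int, d_ff: int) -> int:
    # Two linear layers: d_model -> d_ff and d_ff -> d_model, each with a bias.
    return (d_model * d_ff + d_ff) + (d_ff * d_model + d_model)

def layer_norm_params(d_model: int) -> int:
    # One scale and one shift vector.
    return 2 * d_model

def encoder_layer_params(d_model: int, d_ff: int) -> int:
    # Self-attention + feed-forward, each followed by a layer norm.
    return (attention_params(d_model)
            + feed_forward_params(d_model, d_ff)
            + 2 * layer_norm_params(d_model))

def decoder_layer_params(d_model: int, d_ff: int) -> int:
    # Self-attention + cross-attention + feed-forward, each followed by a layer norm.
    return (2 * attention_params(d_model)
            + feed_forward_params(d_model, d_ff)
            + 3 * layer_norm_params(d_model))

def transformer_params(d_model: int, d_ff: int, n_layers: int, vocab_size: int) -> int:
    # Shared token embedding plus n_layers encoder and n_layers decoder layers.
    embedding = vocab_size * d_model
    encoder = n_layers * encoder_layer_params(d_model, d_ff)
    decoder = n_layers * decoder_layer_params(d_model, d_ff)
    return embedding + encoder + decoder

total = transformer_params(d_model=512, d_ff=2048, n_layers=6, vocab_size=37000)
print(f"Total parameters: {total:,}")
```

Under these assumptions the feed-forward sub-layer accounts for roughly twice as many weights as a single attention sub-layer, and the embedding matrix is a sizeable share of the total, which is why the vocabulary size matters for small models.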

PAPERS & PROJECTS

3DHumanGAN: Towards Photo-realistic 3D-Aware Human Image Generation

3DHumanGAN is a 3D-aware generative adversarial network (GAN) that synthesizes images of full-body humans with consistent appearances under different view angles and body poses. The model is learned adversarially from a collection of web images without the need for manual annotation.

Novel View Synthesis with Diffusion Models

3DiM is a diffusion model for 3D novel view synthesis from as few as a single image. In comparisons with prior work on the SRN ShapeNet dataset, 3DiM's generated videos from a single view achieve much higher fidelity while being approximately 3D consistent. Tap into the results!

RANA: Relightable Articulated Neural Avatars

RANA is a relightable and articulated neural avatar for the photorealistic synthesis of humans under arbitrary viewpoints, body poses, and lighting. It is a novel framework to model humans while disentangling their geometry, texture, and lighting environment from monocular RGB videos.

UDE: A Unified Driving Engine for Human Motion Generation

Unified Driving Engine (UDE) is presented as the first unified engine that can generate human motion sequences from either natural language or audio sequences, supporting both text-driven and audio-driven human motion generation. Check out how it works!

What do Vision Transformers Learn? A Visual Exploration

In this paper, the researchers address the obstacles to performing visualizations on ViTs. Assisted by these solutions, they observe that neurons in ViTs trained with language model supervision are activated by semantic concepts rather than visual features. Learn more!

RT-1: Robotics Transformer for Real-World Control at Scale

In this paper, the authors present a model class, dubbed Robotics Transformer, that exhibits promising scalable model properties. They study different model classes and their ability to generalize as a function of the data size, model size, and data diversity.

COURSES

Deep Learning Course [University of Geneva]

This course is a thorough introduction to DL, with examples in PyTorch. It covers topics such as machine learning objectives and main challenges, tensor operations, automatic differentiation, gradient descent, deep-learning-specific techniques, and generative, recurrent, and attention models.

BOOKS

The Principles of Deep Learning Theory

This book by Daniel A. Roberts, Sho Yaida, and Boris Hanin develops an effective theory approach to understanding deep neural networks of practical relevance. Check out the book for free!
