TitanML, a startup that helps enterprises deploy LLMs efficiently and effortlessly, has raised $2.8 million in a pre-seed round led by Octopus Ventures, with participation from angel investors. The company claims that its software, Titan Takeoff, makes deploying LLMs easier, faster, and cheaper for ML teams in enterprise settings.

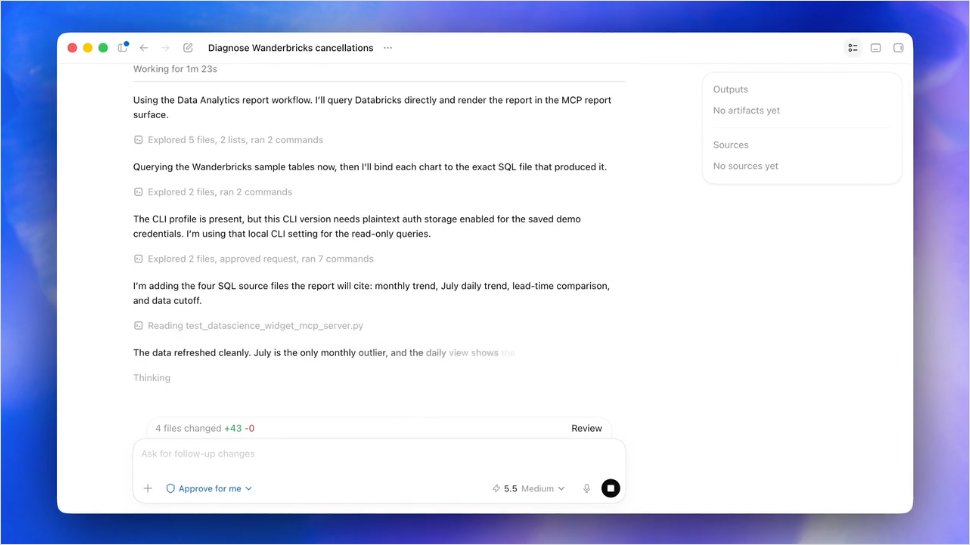

Driven by the ongoing battle to secure enough computing power for AI development and applications, TitanML has identified a set of best practices that help teams make their AI applications faster, more efficient, and, as a result, cheaper. Among its recommendations, TitanML echoes the trend of moving away from large models such as GPT-4 in favor of smaller models fine-tuned for the intended application. On the more technical side, the company discusses good computational hygiene, along with compression, optimization, and acceleration techniques. The downside of these techniques is that they are too complicated for teams in an enterprise setting to apply themselves. The appeal of Titan Takeoff is that the software automates all of these tasks, so teams can benefit from optimized and compressed models before deploying them.

Currently, TitanML offers its product in two pricing tiers. The free community version is aimed at individuals looking to self-host LLMs. It features inference optimization for single-GPU deployment, rapid prompt experimentation with a chat and playground UI, and generation parameter control, among other features. The pro version is priced per use case and comprises a monthly fee plus an annual license while the models are in production. It includes every feature in the community version, along with additional ones such as multi-GPU and multi-model deployment, personalized onboarding, and dedicated support.
